VisionField is an image discovery prototype where you don’t type a perfect query; you fly through a 3D field of images and steer with scroll and pan until something clicks. The point is to see whether that “converge by judgment” feel beats endless grid scrolling, and whether the performance model holds up. Everything’s deterministic (keyword → seed → same world every time), and the images are Picsum placeholders so I could iterate without touching real storage.
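To make the keyword → seed → world chain concrete, here is a minimal sketch of one way it could work. It is not the project's actual code: `hashKeyword`, `mulberry32`, `buildWorld`, `TAG_POOL`, the `SpaceNode` fields, and all the numeric ranges are assumptions for illustration. The hash is an FNV-1a style hash over UTF-16 code units, and the URLs use Picsum's seeded form so the same seed always resolves to the same placeholder.

```typescript
// All names and field choices below are hypothetical, for illustration only.
interface SpaceNode {
  id: number;
  x: number;
  y: number;
  z: number;      // depth; more negative = further from the start
  layer: number;  // depth layer index
  tags: string[]; // synthetic tags
  url: string;    // seeded Picsum placeholder
}

// FNV-1a style hash: turns a keyword into a stable unsigned 32-bit seed.
function hashKeyword(keyword: string): number {
  let h = 0x811c9dc5;
  for (let i = 0; i < keyword.length; i++) {
    h ^= keyword.charCodeAt(i);
    h = Math.imul(h, 0x01000193);
  }
  return h >>> 0;
}

// mulberry32: a small seeded PRNG that returns floats in [0, 1).
function mulberry32(seed: number): () => number {
  let a = seed >>> 0;
  return () => {
    a = (a + 0x6d2b79f5) | 0;
    let t = Math.imul(a ^ (a >>> 15), 1 | a);
    t = (t + Math.imul(t ^ (t >>> 7), 61 | t)) ^ t;
    return ((t ^ (t >>> 14)) >>> 0) / 4294967296;
  };
}

const TAG_POOL = ["warm", "cool", "portrait", "landscape", "texture", "urban"];

// Same keyword in, same list of nodes out. No Math.random anywhere.
function buildWorld(keyword: string, count: number, layers = 4): SpaceNode[] {
  const seed = hashKeyword(keyword.trim().toLowerCase());
  const rand = mulberry32(seed);
  const nodes: SpaceNode[] = [];
  for (let id = 0; id < count; id++) {
    const layer = Math.floor(rand() * layers);
    nodes.push({
      id,
      x: (rand() - 0.5) * 20,
      y: (rand() - 0.5) * 12,
      z: -(layer * 10 + rand() * 10),
      layer,
      tags: [TAG_POOL[Math.floor(rand() * TAG_POOL.length)]],
      url: `https://picsum.photos/seed/${seed}-${id}/512/512`,
    });
  }
  return nodes;
}
```

Because every value comes from the one seeded generator, sharing a keyword (or the seed itself) is enough to reproduce a session.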

You scroll through depth; nodes you pass get recycled ahead so it never really “ends.” Right-drag pans. I gated hover/click to a small interaction band so you’re not accidentally hitting tiny far tiles. Filters don’t nuke the space; they re-weight it. Matches come forward, non-matches fade back. Feels like steering, not resetting.
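Both ideas in that paragraph are small in code. The sketch below is an assumption about how they might look, not the prototype's implementation: `FieldNode`, `recycle`, `targetWeight`, `stepWeight`, the camera-looks-down-negative-z convention, and the 0.15 floor for non-matches are all made up for illustration.

```typescript
// Hypothetical node shape: just what recycling and re-weighting need.
interface FieldNode {
  z: number;       // world depth; the camera travels toward more negative z
  tags: string[];
  weight: number;  // 0..1, drives opacity/scale elsewhere
}

// Move a node that has fallen too far behind the camera back to the far end.
// The loop handles fast scrolling that skips more than one field length.
function recycle(node: FieldNode, cameraZ: number, fieldDepth: number, behindBuffer: number): void {
  if (fieldDepth <= 0) return; // guard against an infinite loop
  while (node.z > cameraZ + behindBuffer) {
    node.z -= fieldDepth;
  }
}

// Filters never remove nodes; they only choose a target weight.
function targetWeight(node: FieldNode, activeTags: string[]): number {
  if (activeTags.length === 0) return 1; // no filter: everything is equal
  const matches = node.tags.some((t) => activeTags.includes(t));
  return matches ? 1 : 0.15; // non-matches fade back but stay in the field
}

// Ease toward the target each frame so nodes glide instead of popping.
function stepWeight(node: FieldNode, activeTags: string[], ease = 0.1): void {
  node.weight += (targetWeight(node, activeTags) - node.weight) * ease;
}
```

Since a filter change only moves the target weight, the field keeps its layout and the camera keeps its position, which is what makes filtering feel like steering rather than a reset.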

The seed drives positions, depth layers, fake tags, and Picsum URLs. That made the experience debuggable and shareable (same seed = same session).

Auth is Clerk: email/password with a verification code, confirm-password on sign-up, and Google OAuth. Downloads are gated: if you hit Download while signed out, the sign-in panel opens, and once you sign in the download goes through so you don’t lose the click. The minimap is a signed-in feature and stays off when you’re signed out. There’s no server-side download logging for now.
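The "don't lose the click" behaviour comes down to remembering one pending intent across the sign-in step. Here is a rough sketch of that pattern. It deliberately avoids any Clerk API: `DownloadGate`, `request`, `onSignedIn`, and the two callbacks are hypothetical, and the caller is assumed to supply the signed-in state and to call `onSignedIn` when auth completes.

```typescript
type DownloadFn = (imageUrl: string) => void;

// Hypothetical helper: holds at most one download that is waiting on sign-in.
class DownloadGate {
  private pendingUrl: string | null = null;
  private startDownload: DownloadFn;
  private openAuthPanel: () => void;

  constructor(startDownload: DownloadFn, openAuthPanel: () => void) {
    this.startDownload = startDownload;
    this.openAuthPanel = openAuthPanel;
  }

  // Called when the user clicks Download.
  request(imageUrl: string, isSignedIn: boolean): void {
    if (isSignedIn) {
      this.startDownload(imageUrl);
      return;
    }
    this.pendingUrl = imageUrl; // remember the click
    this.openAuthPanel();
  }

  // Called once the auth layer reports a successful sign-in.
  onSignedIn(): void {
    if (this.pendingUrl === null) return;
    const url = this.pendingUrl;
    this.pendingUrl = null; // clear first so a repeat call can't download twice
    this.startDownload(url);
  }
}
```

Keeping only the latest pending URL is a design choice in this sketch: if someone clicks Download on two images before signing in, only the last one resumes.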

Performance

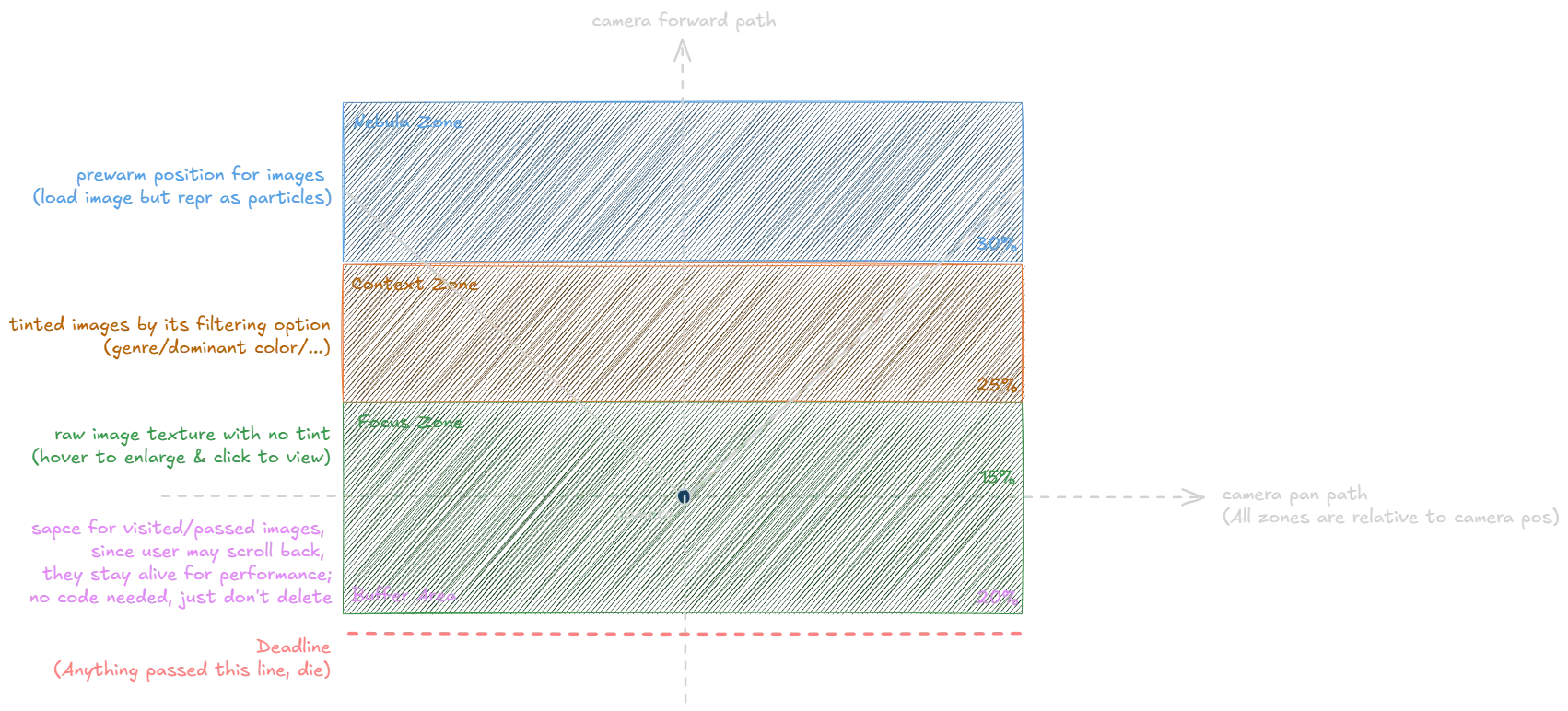

Performance is the interesting bit. I treated it like a real-time system: the space in front of the camera is split into bands (far = cheap particles, mid = tinted/partial, near = full resolution + interactable, behind = short buffer then recycle). There’s a focus budget per frame so only the closest N nodes get full treatment, and a LIFO texture queue with hard limits so memory doesn’t blow up. Billboards face the camera; opacity/scale come from distance + filter weight. One wart: movement is currently a fixed step per frame, so it can speed-burst when FPS dips; it should be scaled by Δt, with the timestep clamped.
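The pieces of that model are easier to see side by side. This is a sketch under stated assumptions, not the prototype's code: the band thresholds, `classifyBand`, `pickFocus`, `TextureQueue`, `advance`, and `MAX_DT` are all hypothetical. The queue here applies its hard limit to pending requests, which is one reading of "hard limits", and `advance` shows the Δt fix described above rather than the current frame-based movement.

```typescript
type Band = "behind" | "near" | "mid" | "far";

// Hypothetical thresholds in world units; dist is distance in front of the camera.
const NEAR_MAX = 15;
const MID_MAX = 40;

function classifyBand(dist: number): Band {
  if (dist < 0) return "behind";
  if (dist <= NEAR_MAX) return "near";
  if (dist <= MID_MAX) return "mid";
  return "far";
}

// Focus budget: only the closest `budget` near-band nodes get full treatment.
function pickFocus(nodes: { id: number; dist: number }[], budget: number): number[] {
  return nodes
    .filter((n) => classifyBand(n.dist) === "near")
    .sort((a, b) => a.dist - b.dist)
    .slice(0, budget)
    .map((n) => n.id);
}

// LIFO queue of texture requests with a hard cap on how many can be pending.
class TextureQueue {
  private stack: string[] = [];
  private maxPending: number;

  constructor(maxPending: number) {
    this.maxPending = maxPending;
  }

  push(url: string): void {
    this.stack.push(url);
    // Over the cap: drop the oldest request, which is the least likely to still matter.
    if (this.stack.length > this.maxPending) this.stack.shift();
  }

  // Newest request first, so what just came into view loads before stale requests.
  next(): string | undefined {
    return this.stack.pop();
  }
}

// Delta-time movement with a clamped timestep, so a slow frame can't cause a jump.
const MAX_DT = 1 / 30; // seconds

function advance(cameraZ: number, speed: number, dtSeconds: number): number {
  const dt = Math.min(Math.max(dtSeconds, 0), MAX_DT);
  return cameraZ - speed * dt; // camera travels toward negative z
}
```

With the clamp, a half-second hitch moves the camera as if only 1/30 s had passed, trading a brief slowdown for the absence of a visible lurch.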

Outcome

So far it’s a solid proof that exploration-by-judgment works and the pipeline stays stable when you push it. Next steps would be optional server-side download or exploration history (e.g. a DB or Clerk metadata), server-side signed URLs if we add real assets, swapping Picsum for a real source while keeping the same SpaceNode shape, and maybe vector search over real tags instead of synthetic ones.